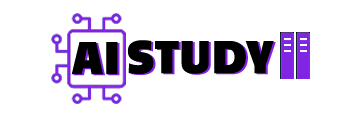

Qwen Image just added three control models: Canny, Depth, and Inpaint. I turned them into a single low-VRAM graph that runs on GGUF.

What this solves

- Lock pose and camera with depth

- Follow clean outlines with canny

- Change only a masked area with inpaint

- Keep VRAM low with GGUF Q4 when needed

I made a quick video tutorial that walks through this Qwen Image ControlNet low-VRAM workflow in ComfyUI.

Update ComfyUI and add the control models

- Update ComfyUI so the new nodes show up.

- In the node search, type `qwen` and pick Qwen-Image DiffSynth ControlNets.

- Drop in a Model Patcher Loader. That is where you choose canny, depth, or inpaint.

- Put the control files here, then restart ComfyUI or press R:

Control models (for Depth / Canny / Inpaint)

ComfyUI/models/model_patches/

That is it. The node will list the control models once they load.
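If the dropdown stays empty, it can help to confirm the files really landed in the folder above. Here is a minimal sketch, assuming the control files use the `.safetensors` extension (the post does not name them) and that `ComfyUI` is a placeholder for wherever your install lives. The function name `list_control_models` is made up for this example.

```python
from pathlib import Path

# Assumption: control files end in .safetensors. Adjust if yours differ.
MODEL_EXTENSIONS = {".safetensors"}


def list_control_models(comfy_root):
    """Return sorted file names found in <comfy_root>/models/model_patches/."""
    patch_dir = Path(comfy_root) / "models" / "model_patches"
    if not patch_dir.is_dir():
        # Folder missing: nothing for the loader to list.
        return []
    return sorted(
        p.name
        for p in patch_dir.iterdir()
        if p.is_file() and p.suffix.lower() in MODEL_EXTENSIONS
    )


if __name__ == "__main__":
    # "ComfyUI" is a placeholder path, not a required location.
    found = list_control_models("ComfyUI")
    print(found if found else "No control models found in models/model_patches/")
```

An empty result usually means the files went into a different folder or the path to the install is wrong.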

One workflow, three jobs

I built one workflow so you do not need three graphs.

- Inpaint: pick an image mask from the list and feed it to the inpaint input

- Canny or depth: feed the image to the control node

- Switch the control in Model Patcher Loader and render

I left a small note on the canvas so it reads “one graph, three jobs.” It helps when you come back later.

Files you need

- Base model: Qwen Image checkpoint FP16 or BF16

- Text encoder: `qwen_2.5_vl_7b_fp8_scaled.safetensors`

- VAE: `qwen_image_vae.safetensors`

- Optional speed: Lightning 4 step LoRA

All of the model files can be downloaded from here

I restart ComfyUI after copying so the files appear in the dropdowns.

Checkpoint vs GGUF on low VRAM

- Checkpoint path: turn on Load Diffusion Model, pick the Qwen Image model, set Load CLIP to the Qwen text encoder, set Load VAE to the Qwen VAE

- GGUF path: turn on GGUF UNet Loader, bypass Load Diffusion Model, keep the same CLIP and VAE

If VRAM is low, go Q4.
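The choice between the two paths can be written down as a tiny rule. This is only a sketch: the 12 GB cutoff is an assumption borrowed from the 6 to 12 GB range mentioned for the small VRAM preset, and `pick_loader` is a hypothetical helper, not something ComfyUI provides.

```python
def pick_loader(vram_gb, low_vram_limit_gb=12.0):
    """Return which loader node to enable and which to bypass.

    low_vram_limit_gb is an assumed cutoff, not a measured threshold.
    """
    if vram_gb <= low_vram_limit_gb:
        # Low VRAM: use the GGUF branch with a Q4 quant.
        return {
            "enable": "GGUF UNet Loader",
            "bypass": "Load Diffusion Model",
            "quant": "Q4",
        }
    # Enough VRAM: use the regular checkpoint branch.
    return {
        "enable": "Load Diffusion Model",
        "bypass": "GGUF UNet Loader",
        "quant": None,
    }


print(pick_loader(8))
print(pick_loader(24))
```

Either way, Load CLIP and Load VAE stay the same; only the model loader changes.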

Size picker so you do not type numbers

I added a simple resolution node.

- Feed your loaded image and it auto matches the size

- Or pick a preset that plays nice with Qwen Image

- Flip vertical or horizontal

- Type an exact size like 1920×1080

- There is a small handle to nudge size by eye

- A TV icon applies video presets like 720p, 1080p, 4K

No width and height math. Just pick and move on.
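For the curious, here is roughly the math a size picker like this saves you from. It is a sketch under assumptions: snapping to a multiple of 16 is my guess at a model-friendly size, not a documented rule of the node, and `fit_size` and `swap_orientation` are names invented for the example.

```python
def fit_size(width, height, multiple=16, max_side=None):
    """Match an image's size, optionally cap the longer side, then snap.

    multiple=16 is an assumption about what the model likes.
    """
    if max_side is not None and max(width, height) > max_side:
        # Scale both sides by the same factor to keep the aspect ratio.
        scale = max_side / max(width, height)
        width, height = width * scale, height * scale

    def snap(value):
        # Round to the nearest multiple, never going below one multiple.
        return max(multiple, int(value / multiple + 0.5) * multiple)

    return snap(width), snap(height)


def swap_orientation(size):
    """Turn a landscape size into portrait, or the other way around."""
    return size[1], size[0]


print(fit_size(1920, 1080))                 # full size, snapped
print(fit_size(1920, 1080, max_side=1024))  # capped, same aspect ratio
print(swap_orientation(fit_size(1920, 1080)))
```

Note that 1080 is not a multiple of 16, so the snapped height differs slightly from the typed one.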

Demo 1 — Depth holds pose and camera

I load a cartoon frame. The character runs with an axe. I want the pose and the view to stay the same while I restyle it.

- Control: depth in Model Patcher Loader

- Processor: Depth v2

- Size: from image

- Prompt: keep it short, “clean cartoon look, keep the pose and the camera angle”

If you see “missing image,” plug your image into the control node’s image input and render again. If you picked the wrong patch and left it bypassed, fix the dropdown, un-bypass, and run.

How strength behaves:

1.0 locks to depth. Pose stays. The axe stays in the same hand. Background distance reads the same. Style can change, layout does not.

0.7 is guided but creative. Big shapes and camera match. Small bits can change. In my run the far house turned into a tree.

0.3 is a hint. Motion and framing feel similar. Composition drifts more.

This is where Qwen Image editing feels steady. Depth gives you structure and you still get a new look.
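To compare the three strengths side by side, you can export the graph in ComfyUI's API format (a dict of node id to `class_type` and `inputs`) and make one copy per strength. A minimal sketch, with loud assumptions: the node id `"7"`, the `class_type` string, and the input name `strength` are placeholders, so check your own exported JSON for the real values.

```python
import copy


def strength_sweep(workflow, control_node_id, strengths=(1.0, 0.7, 0.3)):
    """Return one workflow copy per strength, leaving the original untouched.

    Assumes the control node stores its strength under inputs["strength"].
    """
    variants = []
    for value in strengths:
        wf = copy.deepcopy(workflow)
        wf[control_node_id]["inputs"]["strength"] = value
        variants.append(wf)
    return variants


# Hypothetical one-node workflow, only to show the shape of the data.
base = {"7": {"class_type": "ControlNodePlaceholder", "inputs": {"strength": 1.0}}}
for wf in strength_sweep(base, "7"):
    print(wf["7"]["inputs"]["strength"])
```

Keep the seed and prompt fixed across the copies so the only thing that changes between renders is the control strength.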

Demo 2 — Canny follows the lines

I switch the patch to canny and set the processor to Canny edge. Strength sits at 1.0.

You will see a black and white outline first.

The result sticks to those lines. Pose, contours, edge shapes. My prompt did not set gender, so it picked a boy. If you need a look, say it in the prompt. “Girl in a red jacket.” “Boy in a blue hoodie.” It will follow.

This is the clean pass for Qwen Image editing when you want shapes to stick.
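If you wonder where the black and white outline comes from, here is a toy version of the idea. It is not the real Canny algorithm, which also smooths the image, thins edges, and uses two thresholds. This sketch only measures how fast brightness changes around each pixel and marks it white when the change is large. The function name and the threshold of 50 are assumptions for illustration.

```python
def edge_map(gray, threshold=50):
    """Return a 0/255 outline from a 2D list of grayscale values (0-255).

    Simplified stand-in for Canny: central-difference gradient plus one
    threshold. Border pixels are left black.
    """
    h, w = len(gray), len(gray[0])
    out = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = gray[y][x + 1] - gray[y][x - 1]  # horizontal change
            gy = gray[y + 1][x] - gray[y - 1][x]  # vertical change
            if (gx * gx + gy * gy) ** 0.5 >= threshold:
                out[y][x] = 255
    return out


# A 5x5 image: dark on the left, bright on the right.
image = [[0, 0, 255, 255, 255] for _ in range(5)]
for row in edge_map(image):
    print(row)
```

The white pixels sit along the boundary between the dark and bright halves, which is the kind of outline the control model follows.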

Demo 3 — Inpaint only changes what you paint

I switch the input to Get Image/Mask and set it to output an image_mask. I paint only the shirt area on an anime frame.

Prompt:

change the outfit to a T-shirt, keep skin and hair.

Render. The shirt swaps. Face and hair stay put.

Hair color is the same idea. Mask only the hair. Prompt “change hair color to red, keep everything else the same.” The rest does not move.

This is the neat pass for local fixes with Qwen Image editing in ComfyUI.
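The mask idea itself is simple to picture. The sketch below is a conceptual illustration only, not how the inpaint control model works internally: it shows what "only the painted area changes" means by taking new pixels where the mask is 1 and keeping the original pixels where it is 0. The `composite` helper is hypothetical.

```python
def composite(original, generated, mask):
    """Use generated pixels where mask is 1 and original pixels where it is 0.

    All three arguments are 2D lists of the same shape.
    """
    return [
        [g if m else o for o, g, m in zip(o_row, g_row, m_row)]
        for o_row, g_row, m_row in zip(original, generated, mask)
    ]


original = [[1, 1], [1, 1]]   # stand-in for the source frame
generated = [[9, 9], [9, 9]]  # stand-in for the new render
mask = [[0, 1], [0, 0]]       # only the top-right pixel is painted

print(composite(original, generated, mask))
```

Only the painted pixel takes the new value, which is why a tight mask around the shirt or the hair leaves the face alone.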

Small VRAM preset that does not fight you

- Base: Q4 GGUF

- Lightning: 4 step

- Steps / CFG: 4 / 1

This runs steady on 6 to 12 GB cards.
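If you drive ComfyUI from an exported API-format workflow, the preset can live in one small dict. A minimal sketch: `steps` and `cfg` are the KSampler input names, while the node id `"3"` and the `apply_preset` helper are placeholders for this example.

```python
import copy

# Steps / CFG from the preset above: 4 / 1 with the Lightning 4 step LoRA.
LOW_VRAM_PRESET = {"steps": 4, "cfg": 1.0}


def apply_preset(workflow, sampler_node_id, preset=LOW_VRAM_PRESET):
    """Return a copy of the workflow with the sampler's steps and cfg set."""
    wf = copy.deepcopy(workflow)
    wf[sampler_node_id]["inputs"].update(preset)
    return wf


# Hypothetical sampler node; a real export has more inputs than this.
base = {"3": {"class_type": "KSampler", "inputs": {"steps": 20, "cfg": 4.0, "seed": 0}}}
print(apply_preset(base, "3")["3"]["inputs"])
```

The original workflow dict is left as it was, so you can switch back to the full-quality settings without re-exporting.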

That is the whole Qwen Image ControlNet GGUF Low VRAM ComfyUI Workflow. One graph. Three controls. Pick the strength, render, and your pose stays put while the style changes. If you want me to turn this into a small starter graph you can import, say the word and I will drop the nodes in the same order I showed here.